Java Virtual Machine (JVM) and Its Architecture

In this tutorial, you will learn about the Java Virtual Machine, which helped make Java so popular.

We all know that Java applications follow the "write once, run anywhere" principle. This is possible only because of the JVM: Java programs are designed to run on a virtual machine rather than directly on the hardware. A virtual machine is software, and JVM implementations exist for most kinds of hardware and operating systems, so the same compiled program can run on any of them.

When we compile a Java program, a .class file is generated. The JVM executes this .class file, which contains bytecode. The JVM is also responsible for invoking the main method, where execution starts.
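As a minimal sketch of this flow, the class below can be compiled with `javac HelloJvm.java`, which produces `HelloJvm.class`, and then started with `java HelloJvm`. The class name `HelloJvm` and the helper `greeting()` are made up for this illustration.

```java
class HelloJvm {
    // Hypothetical helper so the message can be checked separately from printing.
    static String greeting() {
        return "Hello from the JVM";
    }

    // The JVM launcher invokes this method first; execution starts here.
    public static void main(String[] args) {
        System.out.println(greeting());
    }
}
```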

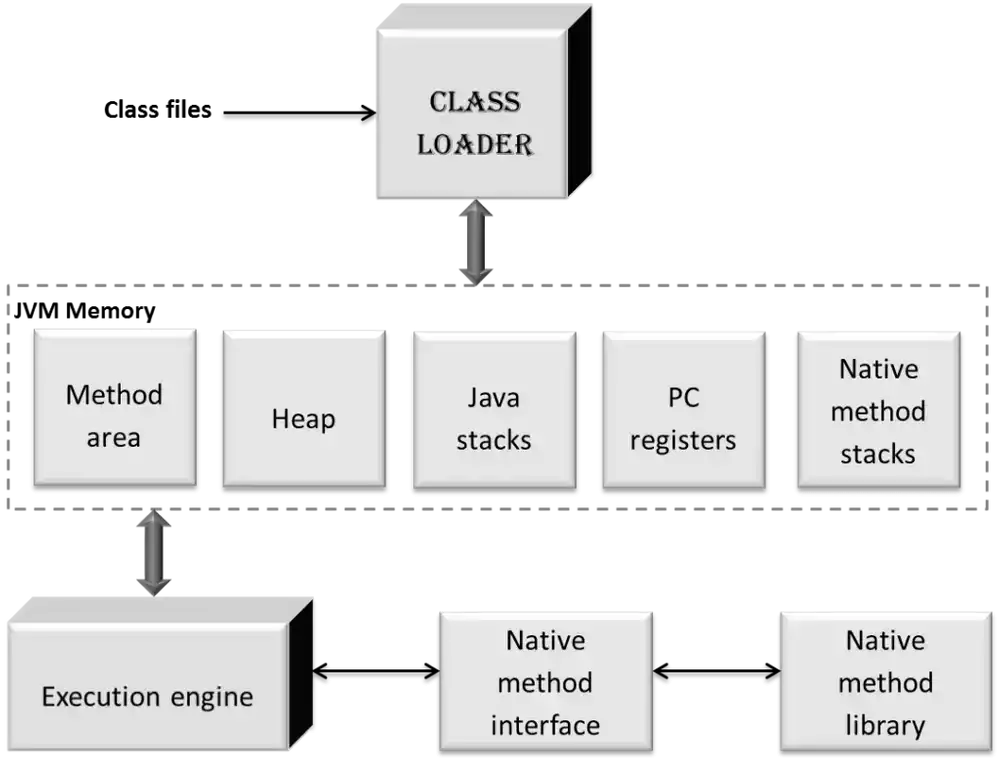

Now we will learn about the JVM architecture, which includes the main components required to run a program on a machine.

JVM Architecture diagram

Now we will look at each part in detail.

Class Loader

The class loader subsystem is responsible for loading, linking, and initializing classes.

Loading

Here the class loaders search for classes and load them in order.

Loading involves three class loaders:

- Bootstrap class loader: It loads the core Java platform classes found on the bootstrap path. Up to Java 8 these classes are in rt.jar, which contains the compiled classes of the core runtime library; from Java 9 onward they are packaged as modules instead.

- Extension class loader: It loads classes that use the Java extension mechanism. These classes reside in the extensions directory as .jar files. From Java 9 onward, this role is taken over by the platform class loader.

- Application class loader: It loads the classes defined by users. These classes are found through the class path.
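To see which loader defined a class, one can ask its `Class` object. The sketch below assumes a HotSpot-style JVM, where `getClassLoader()` returns `null` for classes loaded by the bootstrap loader; the names `LoaderDemo` and `loaderOf` are made up for this illustration, and the loader names printed differ between Java versions.

```java
class LoaderDemo {
    // Returns a readable name for the loader that defined the given class.
    static String loaderOf(Class<?> type) {
        ClassLoader loader = type.getClassLoader();
        // Assumption: null means the class came from the bootstrap loader.
        return loader == null ? "bootstrap" : loader.getClass().getName();
    }

    public static void main(String[] args) {
        System.out.println("String     -> " + loaderOf(String.class));
        System.out.println("LoaderDemo -> " + loaderOf(LoaderDemo.class));

        // Walk up the parent chain, starting at the loader of this class.
        ClassLoader current = LoaderDemo.class.getClassLoader();
        while (current != null) {
            System.out.println("in chain: " + current);
            current = current.getParent();
        }
    }
}
```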

After loading, the JVM creates a Class object for the loaded .class file. This object lives on the heap and represents the class. The programmer can use it to retrieve information about the class.
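A small sketch of retrieving information through the Class object is shown below. The types `ClassInfoDemo` and `Point` and the helper `fieldCount` are hypothetical names used only for this example.

```java
import java.lang.reflect.Method;

class ClassInfoDemo {
    // Hypothetical sample type to inspect.
    static class Point {
        int x;
        int y;

        int sum() {
            return x + y;
        }
    }

    // Counts the fields declared directly in the given class.
    static int fieldCount(Class<?> type) {
        return type.getDeclaredFields().length;
    }

    public static void main(String[] args) {
        Class<?> type = Point.class;
        System.out.println(type.getSimpleName());   // Point
        System.out.println(fieldCount(type));        // 2
        for (Method m : type.getDeclaredMethods()) {
            System.out.println("method: " + m.getName());
        }
        // Every instance refers to the same Class object.
        System.out.println(new Point().getClass() == type); // true
    }
}
```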

Linking

Linking involves verification, preparation, and resolution.

- Verification: This step checks whether the bytecode is properly formatted, that is, whether the binary representation of the class follows the required structural constraints. If it does not, a VerifyError is thrown. This error is a subclass of LinkageError.

- Preparation: In this stage, static fields are created, which means memory is allocated for static variables. They are set only to their default values here; explicit initial values are assigned later, during initialization.

- Resolution: In this process, symbolic references are dynamically replaced with direct references (concrete values).

Initialization

In this phase, classes and interfaces are initialized. This is done by executing the initialization method of the class or interface, which runs the static initializers and assigns the explicit initial values of static fields.
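The difference between the default value set during preparation and the value assigned during initialization can be observed with a small sketch. The names `InitOrderDemo`, `Holder`, and `peek` are made up for this illustration. Static initializers run in textual order, so `peek()` reads `value` before its own initializer has run.

```java
class InitOrderDemo {
    static class Holder {
        // Runs first in textual order, before 'value' has been assigned 42.
        static int early = peek();
        static int value = 42;

        static int peek() {
            return value; // still the default 0 set during preparation
        }
    }

    public static void main(String[] args) {
        System.out.println(Holder.early); // 0
        System.out.println(Holder.value); // 42
    }
}
```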

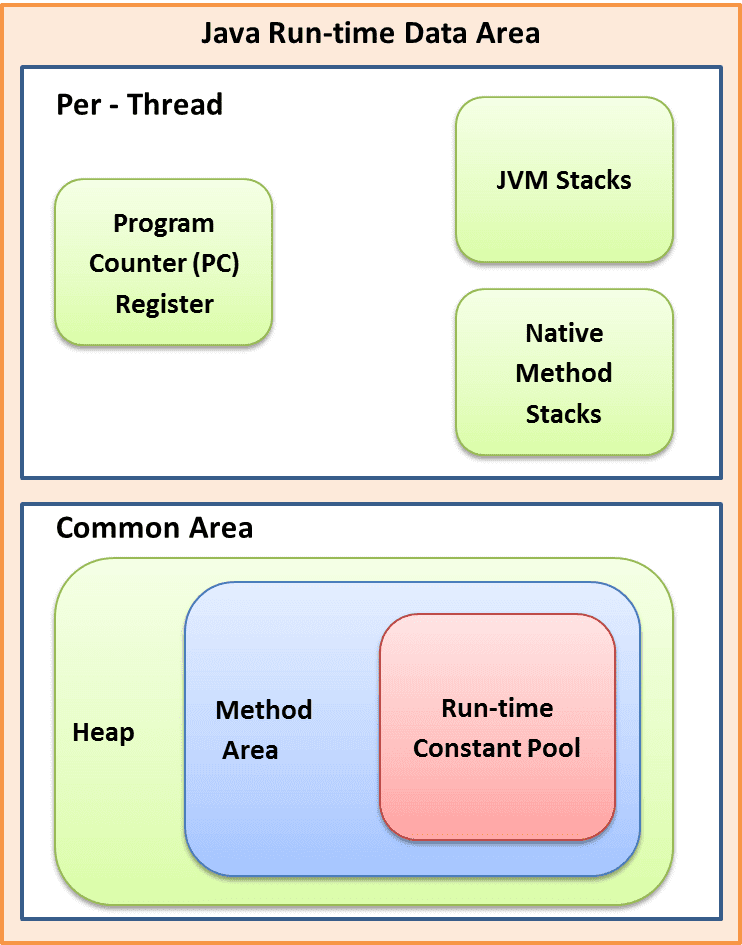

Runtime data areas (memory)

The JVM organizes memory into several runtime data areas, which are needed to execute a program. Some of these areas are shared among all threads, while others are created per thread.

Now we will look at these data areas in detail.

- Method area: This is logically a part of the heap space and contains the class skeleton. It stores per-class structures: the runtime constant pool, static variables, code for methods and constructors, class names, and class type information. It is a shared resource, and there is only one method area per JVM. String literals are stored in the runtime constant pool, which belongs to the class and is not tied to any object instance.

- Heap: Objects are stored here. When we create an object, space for it is allocated on the heap. When an object is no longer reachable, its memory is reclaimed by the garbage collector. The heap is a common space shared among all threads.

- Stack area: This is not shared memory. One runtime stack is created per thread. It holds local variables, parameters, intermediate results, and other data, and it plays a role in method invocation and return. The JVM stores the state of each method invocation in a discrete frame, and frames are pushed onto and popped from the thread's JVM stack. Stack memory does not need to be contiguous and can be expanded dynamically. If there is not enough memory for expansion, an OutOfMemoryError is thrown.

- PC register: Whenever a new thread is created, it gets its own pc register. The pc register stores the address of the instruction currently being executed.

- Native method stacks: A native method stack holds the state of native method invocations. It is also not a shared resource. A native method is declared in Java but implemented in another language, mostly C. These methods are usually used to interface with system calls and libraries.
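The split between shared and per-thread memory can be illustrated with a short sketch. Two threads each keep a local counter in their own stack frame while both update one counter object on the heap. The names `MemoryAreasDemo` and `run` are made up for this example.

```java
import java.util.concurrent.atomic.AtomicInteger;

class MemoryAreasDemo {
    // Runs two threads and returns {local count of thread 0, local count of thread 1, shared total}.
    static int[] run(int iterations) {
        AtomicInteger shared = new AtomicInteger(); // one object on the heap, visible to both threads
        int[] locals = new int[2];
        Thread[] workers = new Thread[2];
        for (int i = 0; i < workers.length; i++) {
            final int id = i;
            workers[i] = new Thread(() -> {
                int local = 0; // lives in this thread's own stack frame
                for (int n = 0; n < iterations; n++) {
                    local++;
                    shared.incrementAndGet();
                }
                locals[id] = local;
            });
            workers[i].start();
        }
        try {
            for (Thread t : workers) {
                t.join(); // wait so the results are complete and visible
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return new int[] { locals[0], locals[1], shared.get() };
    }

    public static void main(String[] args) {
        int[] result = run(1000);
        System.out.println(result[0] + " " + result[1] + " " + result[2]); // 1000 1000 2000
    }
}
```

Each thread counts only its own iterations in `local`, while the heap object accumulates the work of both.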

Execution engine

The execution engine executes the Java bytecode that has been loaded into the runtime data areas. Each bytecode instruction consists of an opcode and, where needed, operands, and the execution engine uses both to carry out the instruction. Java bytecode must be translated into a form the machine understands. This is done by an interpreter or a compiler.
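To get a feel for bytecode instructions, the sketch below shows a tiny method together with the instructions that `javac` typically emits for it; they can be inspected with `javap -c BytecodeDemo` after compiling. The class and method names are made up for this example. Note that some opcodes, such as `iload_0`, encode their operand (here the local variable index) in the opcode itself.

```java
class BytecodeDemo {
    // Typical bytecode for this static method:
    //   iload_0   // push the first int argument
    //   iload_1   // push the second int argument
    //   iadd      // pop both values, push their sum
    //   ireturn   // return the int on top of the operand stack
    static int add(int a, int b) {
        return a + b;
    }

    public static void main(String[] args) {
        System.out.println(add(2, 3)); // 5
    }
}
```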

Interpreter

The interpreter reads and executes the bytecode instructions one by one. It can start executing sooner than a compiler because there is no compilation step. However, code that runs repeatedly has to be interpreted again every time. This is the main disadvantage of the interpreter.

JIT compiler (Just-In-Time)

The JIT compiler is mainly used for code that runs repeatedly, which compensates for the disadvantage of the interpreter. Compiling takes more time than interpreting, but the compiled code runs faster, so the JIT compiler improves overall performance. Every method is interpreted first. If the call count of a method rises above the JIT threshold, that method is compiled by the JIT compiler, which translates its bytecode into native code. After that, the native code is executed directly. In other words, the JIT compiler is invoked at the appropriate time, based on how frequently a method is called.
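The sketch below calls a small method many times, which makes it a likely candidate for JIT compilation. The names `JitDemo`, `square`, and `sumOfSquares` are made up for this example. On a HotSpot JVM, running it with `java -XX:+PrintCompilation JitDemo` should list methods as they get compiled; whether and when a given method is compiled depends on the JVM and its thresholds.

```java
class JitDemo {
    // Small method that is called repeatedly from the loop below.
    static long square(long n) {
        return n * n;
    }

    // Sums the squares of 1..limit, calling square() once per iteration.
    static long sumOfSquares(int limit) {
        long total = 0;
        for (int i = 1; i <= limit; i++) {
            total += square(i);
        }
        return total;
    }

    public static void main(String[] args) {
        // Many calls make square() "hot" from the JVM's point of view.
        System.out.println(sumOfSquares(100_000));
    }
}
```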

Java Native Interface

Java supports native code through the Java Native Interface (JNI). Native methods are useful for system calls and for tasks related to memory management and performance. JNI is a framework for interfacing with native applications: it enables a Java application to call native applications and to be called by them.
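On the Java side, a native method is simply declared with the `native` keyword and has no body. The sketch below uses a hypothetical method `add` whose implementation would live in a native library that is not provided here, so calling it fails with an UnsatisfiedLinkError. The class name `NativeDemo` and the helper `isNative` are also made up for this example.

```java
import java.lang.reflect.Method;
import java.lang.reflect.Modifier;

class NativeDemo {
    // Declared in Java, implemented elsewhere (hypothetical native function, not provided here).
    static native int add(int a, int b);

    // Reports whether the named method of this class carries the 'native' modifier.
    static boolean isNative(String methodName) {
        for (Method m : NativeDemo.class.getDeclaredMethods()) {
            if (m.getName().equals(methodName)) {
                return Modifier.isNative(m.getModifiers());
            }
        }
        return false;
    }

    public static void main(String[] args) {
        System.out.println(isNative("add"));  // true
        System.out.println(isNative("main")); // false
        try {
            add(1, 2); // no native library was loaded, so linking fails
        } catch (UnsatisfiedLinkError e) {
            System.out.println("native implementation not found");
        }
    }
}
```

In a real project, the library containing the implementation would be loaded first, for example with `System.loadLibrary`.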

Native Method Libraries

Native method libraries contain the native methods. Here are some reasons why we may need native libraries.

Our system hardware may have some special capabilities. To use those capabilities in our application, we need native methods. Some native methods also provide extra speed.

Some native methods are also designed for memory management. Native methods are usually written in C or C++.

That’s all about the Java Virtual Machine (JVM).